For most of us it seems almost impossible to go about our daily lives without the internet. In fact, almost 80% of households in the US are connected to the internet. The average American household has approximately 15 connected devices, most of which have video screens. In recent years, we have grown accustomed to constant connectivity on the go and have witnessed the expansion of technology into nearly every aspect of home and personal care, from speakers to appliances to exercise equipment.

And then came the COVID-19 pandemic. People drastically changed the way they worked, attended school, and interacted with friends. Demands on the network skyrocketed: bandwidth usage rose 47% between March 2019 and March 2020, leaving many consumers frustrated by inadequate network performance and slow access to the information they needed.

This sudden, massive spike in demand added urgency to large-scale network infrastructure buildouts that were already underway. Combined with other factors (e.g., low latency requirements), it will also stimulate development in emerging technology areas such as edge computing, 5G, and low earth orbit satellites.

An insatiable need for data

The two primary culprits behind our massively increased bandwidth consumption are video streaming and IoT. Video streaming accounts for roughly 50% of network traffic in urban areas and 75% in rural areas. The data used by customers of “big streamers” such as Netflix, YouTube, Disney+, Hulu and others is expected to increase at least 30% annually over the coming years. Meanwhile, although individual IoT devices such as smart appliances and video doorbells do not consume much data on their own, their combined bandwidth adds up quickly when many of them are active at once.
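To see how many modest data streams add up, here is a minimal Python sketch that sums the bitrates of devices active at the same moment and compounds the 30% annual growth figure cited above. The device list and every per-device bitrate are hypothetical placeholders chosen for illustration, not measurements.

```python
# Hypothetical per-device bitrates in Mbps: illustrative assumptions only.
DEVICE_MBPS = {
    "4k_video_stream": 25.0,
    "hd_video_stream": 5.0,
    "video_doorbell": 2.0,
    "smart_speaker": 0.5,
    "smart_thermostat": 0.1,
}


def concurrent_demand_mbps(active_devices: list[str]) -> float:
    """Sum the bitrates of all devices that are active at the same moment."""
    return sum(DEVICE_MBPS[name] for name in active_devices)


def compound_growth(base: float, annual_rate: float, years: int) -> float:
    """Project a quantity forward at a fixed annual growth rate."""
    return base * (1 + annual_rate) ** years


if __name__ == "__main__":
    evening = ["4k_video_stream", "hd_video_stream", "hd_video_stream",
               "video_doorbell", "smart_speaker", "smart_thermostat"]
    print(f"Peak concurrent demand: {concurrent_demand_mbps(evening):.1f} Mbps")
    # At 30% per year, volume grows to roughly 3.7x after five years.
    print(f"Growth multiplier after 5 years: {compound_growth(1.0, 0.30, 5):.2f}x")
```

With these placeholder numbers the six active devices need 37.6 Mbps at once, and 30% annual growth compounds to about 3.7 times today's volume after five years.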

Internet service providers such as AT&T and Lumen are steadily replacing old copper connections with fiber optic cabling and bringing higher bandwidth to more homes, but even these ongoing improvements won’t be enough to provide satisfactory service in an era of pervasive connectivity. The average household today can get by with download speeds of 35 to 80 Mbps, but this minimum is expected to rise into the thousands of Mbps (that is, gigabit-per-second speeds) by 2030. Upload speeds will also need to increase dramatically. With the rise of applications such as AR/VR and the expansion of telemedicine, homes will need to send large amounts of data over the internet, pushing upload speeds to become nearly symmetrical with download speeds.
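To make the upload argument concrete, the sketch below computes how long it takes to send a file at different upload speeds. The 2 GB file size and the 10 Mbps and 1,000 Mbps upload speeds are hypothetical values for illustration; the calculation uses decimal units (1 GB = 8,000 megabits) and ignores protocol overhead.

```python
def transfer_seconds(size_gb: float, rate_mbps: float) -> float:
    """Time to move size_gb gigabytes at rate_mbps megabits per second.

    Uses decimal units (1 GB = 8,000 megabits) and ignores protocol overhead.
    """
    if rate_mbps <= 0:
        raise ValueError("rate_mbps must be positive")
    megabits = size_gb * 8_000
    return megabits / rate_mbps


if __name__ == "__main__":
    size_gb = 2.0  # hypothetical telemedicine imaging upload
    for label, upload_mbps in [("asymmetric (10 Mbps up)", 10.0),
                               ("symmetric gigabit (1,000 Mbps up)", 1_000.0)]:
        secs = transfer_seconds(size_gb, upload_mbps)
        print(f"{label}: {secs:,.0f} s")
```

Under these assumptions the upload takes 1,600 seconds (about 27 minutes) on the asymmetric link and 16 seconds on the symmetric one.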

Moving to the edge

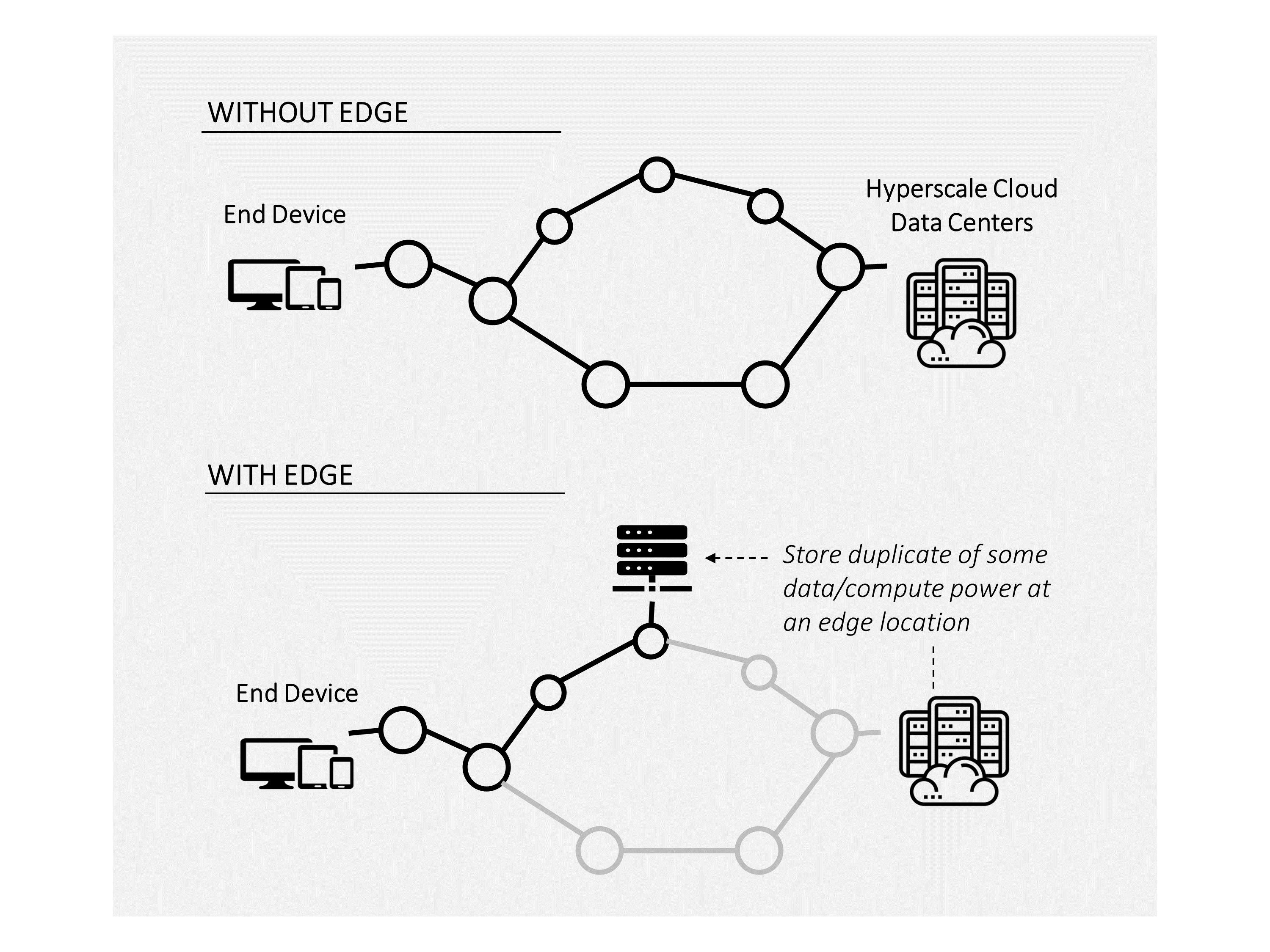

One potential solution to this issue is edge computing, which involves the distribution of computing power and data storage to locations within the network that are closer to end users. Today, executing a task like a Google search requires sending a request from the user’s end device through a series of nodes and connections all the way to a centralized hyperscale cloud data center where the desired data is stored. Then the response must make the same journey back to the user’s device.

Under normal conditions, this journey happens so fast it’s barely noticeable. But as more and more people and devices attempt to accomplish more and more computing tasks, the routes to the hyperscale cloud data center get crowded, and the data’s journey takes longer – sort of like traveling during rush hour. Users experience this data traffic jam as application latency (i.e., things taking longer to load).
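The rush-hour analogy can be made quantitative with a textbook queueing model. The sketch below assumes an M/M/1 queue, a simplification rather than a model of any real router: a link that serves `mu` packets per second and receives `lambda` packets per second has a mean time in system of 1 / (mu - lambda), which grows without bound as utilization approaches 100%. The service rate used here is an arbitrary illustrative number.

```python
def mm1_delay_seconds(arrival_rate: float, service_rate: float) -> float:
    """Mean time a packet spends in an M/M/1 queue (waiting plus service).

    Valid only while arrival_rate < service_rate; otherwise the queue grows forever.
    """
    if arrival_rate >= service_rate:
        raise ValueError("queue is unstable: arrival_rate must be < service_rate")
    return 1.0 / (service_rate - arrival_rate)


if __name__ == "__main__":
    service_rate = 10_000.0  # packets per second (illustrative assumption)
    for utilization in (0.5, 0.8, 0.9, 0.99):
        delay_ms = mm1_delay_seconds(utilization * service_rate, service_rate) * 1000
        print(f"utilization {utilization:.0%}: mean delay {delay_ms:.2f} ms")
```

In this model the mean delay doubles between 80% and 90% utilization and rises tenfold again at 99%, which is why a crowded route feels disproportionately slower.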

Moving capacity from central cloud data centers to smaller data caches near the periphery of the internet backbone reduces strain on the network by limiting the distance and the number of nodes that a data request needs to pass through. This type of distribution is already used in content delivery networks (CDNs), which bring stored data closer to the user for activities such as video and music streaming. Edge locations largely run the same hardware and operating platforms as the cloud, which makes life easier for app developers, and running even a portion of an application’s workload at an edge node makes the application feel faster to the user.
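The CDN pattern described above boils down to a cache at the edge that falls back to a distant origin on a miss. The following sketch models that with a least-recently-used (LRU) cache; the class name `EdgeNode`, the capacity, and the 10 ms edge and 80 ms origin round-trip times are hypothetical values chosen for illustration, not figures from any real CDN.

```python
from collections import OrderedDict
from typing import Callable


class EdgeNode:
    """Hypothetical edge cache: serve hits locally, fetch misses from the origin."""

    def __init__(self, capacity: int, fetch_from_origin: Callable[[str], bytes],
                 edge_rtt_ms: float = 10.0, origin_rtt_ms: float = 80.0):
        self.capacity = capacity
        self.fetch_from_origin = fetch_from_origin
        self.edge_rtt_ms = edge_rtt_ms      # user <-> edge round trip (assumed)
        self.origin_rtt_ms = origin_rtt_ms  # edge <-> central cloud round trip (assumed)
        self._cache: OrderedDict = OrderedDict()

    def get(self, key: str) -> tuple[bytes, float]:
        """Return (content, estimated latency in ms) for a user request."""
        if key in self._cache:
            self._cache.move_to_end(key)  # mark as most recently used
            return self._cache[key], self.edge_rtt_ms
        content = self.fetch_from_origin(key)  # miss: go all the way to the origin
        self._cache[key] = content
        if len(self._cache) > self.capacity:
            self._cache.popitem(last=False)  # evict the least recently used item
        return content, self.edge_rtt_ms + self.origin_rtt_ms


if __name__ == "__main__":
    origin = {"episode-1": b"...video bytes...", "episode-2": b"...more bytes..."}
    node = EdgeNode(capacity=1, fetch_from_origin=lambda k: origin[k])
    print(node.get("episode-1")[1])  # first viewer: miss, 90.0 ms
    print(node.get("episode-1")[1])  # next viewer: hit, 10.0 ms
```

Only the first request for a popular title pays the long trip to the central data center; later viewers nearby are served from the edge, which is the effect CDNs rely on.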

Edge computing is complementary to the current cloud infrastructure, and both will have a role to play going forward. For example, the video feed from a doorbell camera can be analyzed at a regional data center, while only the important moments (like a package delivery) are sent to the cloud for storage. Similarly, the latest Netflix release can be pushed from Amazon’s hyperscale data centers on the east and west coasts to points of internet service across the US and around the world, enabling millions of viewers to access it quickly while limiting congestion in the network. These win-win arrangements, which benefit players throughout the ecosystem, explain many of the recent partnership announcements between network operators, cloud service providers, and streaming services.
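The doorbell example follows a filter-at-the-edge pattern: analyze everything close to the source and forward only what matters. A minimal sketch follows; the `Frame` type, the `motion_score` field, the 0.8 threshold, and the `upload_to_cloud` callback are all hypothetical stand-ins for whatever detection model and storage API a real deployment would use.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Frame:
    timestamp: float
    motion_score: float  # hypothetical output of an edge-side detector, 0.0 to 1.0
    jpeg: bytes


def process_at_edge(frames: Iterable[Frame],
                    upload_to_cloud: Callable[[Frame], None],
                    threshold: float = 0.8) -> int:
    """Analyze every frame locally and upload only the important moments.

    Returns how many frames were uploaded.
    """
    uploaded = 0
    for frame in frames:
        if frame.motion_score >= threshold:  # e.g. a package delivery
            upload_to_cloud(frame)
            uploaded += 1
        # Frames below the threshold are never sent on to the cloud.
    return uploaded


if __name__ == "__main__":
    stored: list[Frame] = []
    feed = [Frame(t, score, b"") for t, score in [(0.0, 0.1), (1.0, 0.95), (2.0, 0.2)]]
    count = process_at_edge(feed, stored.append)
    print(f"Uploaded {count} of {len(feed)} frames")
```

The bandwidth saving comes from the ratio of uploaded frames to analyzed frames; the cloud still receives every event worth keeping.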

Always connected, everywhere

Another way to meet our need for constant connectivity is wireless service, delivered either as 5G mobile connectivity or as satellite internet. The two technologies generally serve very different needs and populations: 5G provides on-the-go data access in dense urban areas, while satellite networks deliver reliable internet to homes that are difficult to reach with terrestrial connections (e.g., in rural areas). Both are attracting significant interest and investment from major industry players as consumers demand more and more bandwidth.

High-speed 5G mobile connectivity is deployed using millimeter-wave signals relayed through a network of towers that act as booster stations. It outperforms previous generations of wireless data because it transmits at higher frequencies, where wider channels (and therefore more bandwidth) are available; the tradeoff is that these high-frequency waves travel only short distances. That’s why 5G rollout is focused on urban areas, where booster stations can be placed every 1,000 meters or so to propagate the signal around the city.
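The frequency versus range tradeoff can be illustrated with two standard formulas: wavelength λ = c / f, and free-space path loss FSPL(dB) = 20·log10(4πdf / c). The sketch below compares a sub-6 GHz frequency with a millimeter-wave one at the approximate 1,000-meter booster spacing mentioned above. The 3.5 GHz and 28 GHz values are example frequencies chosen for illustration, and free-space loss assumes ideal isotropic antennas; real millimeter-wave links also lose signal to walls, foliage, and rain.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def wavelength_mm(freq_hz: float) -> float:
    """Wavelength in millimeters: lambda = c / f."""
    return SPEED_OF_LIGHT_M_S / freq_hz * 1000.0


def free_space_path_loss_db(distance_m: float, freq_hz: float) -> float:
    """Friis free-space path loss between isotropic antennas, in dB."""
    return 20.0 * math.log10(4.0 * math.pi * distance_m * freq_hz / SPEED_OF_LIGHT_M_S)


if __name__ == "__main__":
    distance_m = 1_000.0  # approximate booster-station spacing from the text
    for label, freq_hz in [("3.5 GHz (sub-6 GHz)", 3.5e9), ("28 GHz (mmWave)", 28e9)]:
        print(f"{label}: wavelength {wavelength_mm(freq_hz):.1f} mm, "
              f"path loss over 1 km {free_space_path_loss_db(distance_m, freq_hz):.1f} dB")
```

Because path loss grows with 20·log10(f), multiplying the frequency by 8 (3.5 GHz to 28 GHz) adds about 18 dB of loss at any fixed distance, roughly 64 times less received power in free space before obstacles are even considered.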

Given the short range of its high-frequency signals, 5G is unlikely to be a feasible connectivity solution outside densely populated areas. This is a shame, considering that the greatest divide in internet access is in rural communities. Reliable internet access has become central to participating in the economy, sustaining a rich social fabric, and supporting education at all levels, and yet almost 35% of households, mostly in rural regions, are unable to get high-speed internet access (a number that is likely underreported).

In an attempt to close the rural digital divide, the FCC has created the Rural Digital Opportunity Fund. Over the next ten years, this fund will distribute more than $20 billion to network operators to subsidize the implementation of new infrastructure in underserved rural regions. The vast majority of this funding will go to traditional copper and fiber installations, but some is already being directed toward another emerging connectivity solution: innovative satellite internet service.

Traditional geostationary (GEO) satellites have been used for many years for TV and internet services like Dish or DirecTV, but network speeds are slow, latency is high, and data limits can be prohibitive. A new technology, low earth orbit satellites (LEOS), addresses these issues by placing satellites much closer to the earth and delivering true high-speed internet through a complex network of satellites and ground stations. LEOS networks are constrained in bandwidth per area and are therefore not suitable for population-dense urban centers, but they could be a credible competitive threat to cable providers in rural areas.
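Why altitude matters can be shown with back-of-the-envelope arithmetic. In the simplest case a request travels from the user up to one satellite and down to a ground station, and the reply retraces that path, so the minimum round trip is four times the altitude divided by the speed of light. The sketch assumes the satellite is directly overhead (real slant paths are longer) and ignores processing and terrestrial delays; 35,786 km is the geostationary altitude, while 550 km is an assumed typical LEO altitude used for illustration.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458


def min_round_trip_ms(altitude_km: float) -> float:
    """Lower bound on round-trip propagation delay via one satellite, in ms.

    Path: user -> satellite -> ground station, then the reply retraces it,
    so four legs of length altitude_km (satellite assumed directly overhead).
    """
    return 4 * altitude_km / SPEED_OF_LIGHT_KM_S * 1000.0


if __name__ == "__main__":
    for label, altitude_km in [("GEO", 35_786.0), ("LEO (assumed ~550 km)", 550.0)]:
        print(f"{label}: at least {min_round_trip_ms(altitude_km):.0f} ms round trip")
```

Physics alone puts a GEO round trip near 477 ms, while the assumed LEO orbit allows about 7 ms, which is why moving satellites closer to the earth makes interactive applications practical.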

Until recently, LEOS technology was unproven. Within the last year, however, Elon Musk’s Starlink network has demonstrated high-speed internet delivery after several months of beta testing with users around the country. The FCC has been supportive of this new technology, awarding Starlink ~$880M from the Rural Digital Opportunity Fund, a move that could encourage other LEOS players to keep building out their own constellations. Although Starlink is far ahead of the competition, there is room for improvement in constellation layout, satellite technology, and the antenna receivers for these wireless signals, so this technology will likely see significant innovation over the coming years.

As we continue to adopt more devices in our homes, engage in bandwidth-hungry applications like telemedicine and AR/VR, and demand the ability to work from anywhere, we will stimulate continued development of these technologies. Computing power will be distributed across the network and our devices, and will keep growing, allowing us to do more online than ever before. The COVID-19 pandemic has accelerated this technological evolution, and our insatiable need for connectedness is not going away any time soon. We will continue to see robust innovation in networking technology as we reach a new normal of pervasive connectivity.

Find out how Newry can help your organization anticipate future needs and stay ahead of disruptions. Don’t wait until you’re left behind.